Modern businesses depend on continuous streams of high-quality data. Every department, from analytics to marketing to finance, needs reliable information delivered at the right time. When data pipelines slow down or fail, workflows across the entire company can be affected. This is why ETL process optimization has become a top priority for teams that work with large or complex datasets. With the right strategies, companies can reduce delays, cut infrastructure costs, and significantly improve data accuracy.

Understanding the Foundation of ETL Workflows

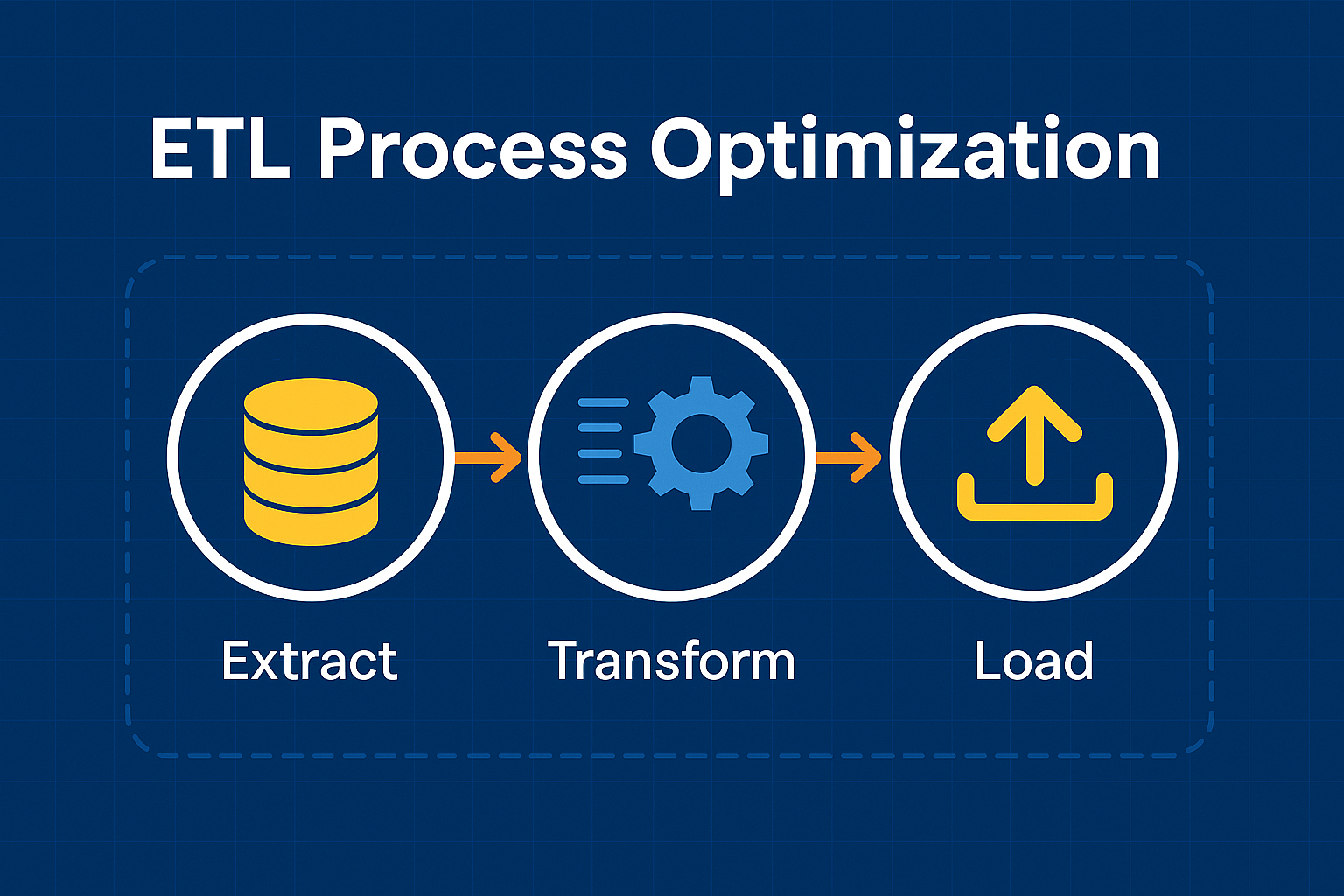

Before improving any workflow, it’s vital to understand how the process functions. ETL stands for Extract, Transform, and Load.

- Extract: Data is collected from multiple sources, including databases, APIs, and cloud storage.

- Transform: Data is cleaned, validated, and reshaped for analytical use.

- Load: The refined data is stored in a target destination such as a data warehouse or data lake.
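To make the three stages concrete, here is a minimal sketch in Python. It assumes an in-memory SQLite database as a stand-in for the warehouse and a hard-coded list as the source; the `orders` table and the `extract`, `transform`, and `load` functions are hypothetical names chosen for illustration.

```python
import sqlite3

# Hypothetical source records; in practice these would come from a database, API, or file.
SOURCE_ROWS = [
    {"id": 1, "email": " Alice@Example.com ", "amount": "19.99"},
    {"id": 2, "email": "bob@example.com", "amount": "5"},
    {"id": 3, "email": "", "amount": "12.50"},  # invalid: missing email
]


def extract():
    """Extract: collect raw records from the source."""
    return list(SOURCE_ROWS)


def transform(rows):
    """Transform: clean, validate, and reshape records for analysis."""
    cleaned = []
    for row in rows:
        email = row["email"].strip().lower()
        if not email:
            continue  # drop rows that fail validation
        cleaned.append((row["id"], email, float(row["amount"])))
    return cleaned


def load(conn, rows):
    """Load: write the refined records to the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders (id INTEGER PRIMARY KEY, email TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")  # stand-in for a data warehouse
load(conn, transform(extract()))
print(conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0])  # → 2
```

Keeping each stage in its own function makes it easy to time, test, and optimize the stages independently.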

Many teams face performance issues because their pipelines grow in complexity without structured planning. Clear architecture is the first step in successful ETL process optimization.

Diagnosing Performance Bottlenecks Step-by-Step

You cannot fix what you cannot measure. Identifying bottlenecks requires a systematic review of the current ETL environment.

Common Issues Include:

- Slow extraction queries

- Heavy or repeated transformation logic

- Poor infrastructure allocation

- High network latency

- Inefficient load techniques

By setting up performance dashboards and using profiling tools, teams can identify the exact stages causing delays. This diagnostic phase guides all future improvements and ensures optimization efforts deliver measurable results.
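A lightweight way to start measuring is to time each stage separately before reaching for a full profiler. The sketch below assumes a hypothetical `timed_stage` helper built on Python's `time.perf_counter` and `contextlib.contextmanager`; the stage bodies are placeholder work, not a real pipeline.

```python
import time
from contextlib import contextmanager

# Accumulated wall-clock seconds per pipeline stage.
stage_timings = {}


@contextmanager
def timed_stage(name):
    """Time the wrapped block and add the elapsed seconds to stage_timings[name]."""
    start = time.perf_counter()
    try:
        yield
    finally:
        elapsed = time.perf_counter() - start
        stage_timings[name] = stage_timings.get(name, 0.0) + elapsed


# Hypothetical stand-ins for real extract/transform/load work.
with timed_stage("extract"):
    rows = list(range(200_000))
with timed_stage("transform"):
    rows = [r * 2 for r in rows if r % 3 == 0]
with timed_stage("load"):
    total = sum(rows)

# Report stages from slowest to fastest so the bottleneck is listed first.
for name, seconds in sorted(stage_timings.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:<10} {seconds * 1000:8.2f} ms")
```

In a real pipeline, the same timings would typically be exported to a monitoring dashboard rather than printed, so trends can be compared across runs.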

Optimizing the Extraction Phase for Better Speed

Extraction problems are common because source systems are often not designed for high-volume data pulls. Improving this stage can drastically enhance pipeline performance.

Effective Optimization Techniques:

- Using incremental extraction instead of full refreshes

- Reducing load on source systems with read replicas

- Applying filtering to avoid pulling unnecessary data

- Increasing throughput with parallel extraction streams
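Incremental extraction and source-side filtering can be combined in a single query. This sketch assumes the source table carries an `updated_at` timestamp stored as ISO 8601 text and uses SQLite as a stand-in for the source system; `make_source`, `extract_incremental`, and the `events` table are hypothetical.

```python
import sqlite3


def make_source():
    """Build a small in-memory stand-in for an operational source table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE events (id INTEGER PRIMARY KEY, status TEXT, "
        "raw_payload TEXT, updated_at TEXT)"
    )
    payload = "x" * 1_000  # a wide column the downstream pipeline does not need
    conn.executemany(
        "INSERT INTO events VALUES (?, ?, ?, ?)",
        [
            (1, "active", payload, "2024-01-01T10:00:00"),
            (2, "inactive", payload, "2024-01-02T09:00:00"),
            (3, "active", payload, "2024-01-03T08:30:00"),
            (4, "active", payload, "2024-01-04T12:00:00"),
        ],
    )
    return conn


def extract_incremental(conn, last_watermark):
    """Fetch only rows changed after last_watermark, and only the columns needed downstream."""
    rows = conn.execute(
        "SELECT id, status, updated_at FROM events "  # raw_payload is never pulled
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_watermark,),
    ).fetchall()
    # The newest updated_at becomes the watermark for the next run.
    new_watermark = rows[-1][2] if rows else last_watermark
    return rows, new_watermark


source = make_source()
batch, watermark = extract_incremental(source, "2024-01-02T00:00:00")
print(len(batch), watermark)  # → 3 2024-01-04T12:00:00
batch, watermark = extract_incremental(source, watermark)
print(len(batch), watermark)  # → 0 2024-01-04T12:00:00
```

The strict `>` comparison assumes timestamps increase monotonically and that no late-arriving change shares the last timestamp seen; production pipelines often re-read a small overlap window or use change data capture instead.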

When extraction is optimized correctly, every downstream stage runs faster and consumes fewer resources. Extraction tuning is one of the most valuable components of ETL process optimization.

Enhancing Transformation Logic for Efficiency

Transformations are often the most resource-intensive aspect of ETL pipelines. Complex calculations, joins, and validations can slow down the system if poorly implemented.

Ways to Improve the Transformation Phase:

- Replace row-by-row logic with set-based operations

- Push transformations to systems designed for computation, such as distributed SQL engines

- Cache lookup tables to reduce repeated queries

- Organize transformation scripts into modular, reusable components
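The difference between repeated lookups, a cached lookup table, and a set-based join can be seen side by side. In this sketch, SQLite stands in for the lookup source, and the `countries` table, the `orders` list, and the three `enrich_*` functions are hypothetical. All three return the same result, but the uncached version issues one query per row while the other two issue a single lookup or join.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE countries (code TEXT PRIMARY KEY, name TEXT)")
conn.executemany(
    "INSERT INTO countries VALUES (?, ?)",
    [("DE", "Germany"), ("FR", "France"), ("US", "United States")],
)

orders = [(1, "DE"), (2, "US"), (3, "DE"), (4, "FR"), (5, "XX")]


def enrich_uncached(rows):
    """Row-by-row: one lookup query per record, repeating the same queries."""
    out = []
    for order_id, code in rows:
        hit = conn.execute("SELECT name FROM countries WHERE code = ?", (code,)).fetchone()
        out.append((order_id, hit[0] if hit else "Unknown"))
    return out


def enrich_cached(rows):
    """Load the lookup table once into a dict, then resolve every row from memory."""
    lookup = dict(conn.execute("SELECT code, name FROM countries"))
    return [(order_id, lookup.get(code, "Unknown")) for order_id, code in rows]


def enrich_set_based(rows):
    """Stage the rows, then let the SQL engine resolve them all in one LEFT JOIN."""
    conn.execute("CREATE TEMP TABLE staged_orders (order_id INTEGER, code TEXT)")
    conn.executemany("INSERT INTO staged_orders VALUES (?, ?)", rows)
    result = conn.execute(
        "SELECT o.order_id, COALESCE(c.name, 'Unknown') FROM staged_orders o "
        "LEFT JOIN countries c ON c.code = o.code ORDER BY o.order_id"
    ).fetchall()
    conn.execute("DROP TABLE staged_orders")
    return result


print(enrich_cached(orders))
```

The cached version suits small, slowly changing lookup tables that fit in memory; for large tables, pushing the join into the engine, as the set-based version does, usually scales better.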

Well-structured transformation logic not only speeds up processing but also makes long-term maintenance far easier.

Improving the Load Phase for Scalability

Loading data into a warehouse or analytical store may seem straightforward, but inefficiencies here can create major pipeline delays.

Best Practices for Load Optimization:

- Use bulk load operations for large datasets

- Partition tables for better query performance

- Build or rebuild indexes after bulk loads rather than maintaining them during the load

- Schedule loads during low-usage windows to avoid contention with other workloads
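Two of these practices, bulk loading and deferring index builds, can be sketched with SQLite as the target: rows are inserted in large batches inside a single transaction, and the index is dropped before the load and rebuilt afterwards. The `sales` table, `idx_sales_day` index, `bulk_load` function, and `batch_size` default are assumptions for illustration; real warehouses usually provide a dedicated bulk path (for example PostgreSQL's `COPY`) that should be preferred over row inserts.

```python
import sqlite3


def bulk_load(conn, rows, batch_size=10_000):
    """Insert rows in batches inside one transaction, then (re)build the index."""
    conn.execute("CREATE TABLE IF NOT EXISTS sales (day TEXT, store_id INTEGER, amount REAL)")
    conn.execute("DROP INDEX IF EXISTS idx_sales_day")  # avoid index upkeep during the load
    with conn:  # commits once on success, rolls back on error
        for start in range(0, len(rows), batch_size):
            conn.executemany(
                "INSERT INTO sales VALUES (?, ?, ?)", rows[start:start + batch_size]
            )
    conn.execute("CREATE INDEX idx_sales_day ON sales (day)")  # build index after loading
    conn.commit()


conn = sqlite3.connect(":memory:")
rows = [(f"2024-01-{i % 28 + 1:02d}", i % 5, float(i)) for i in range(25_000)]
bulk_load(conn, rows)
print(conn.execute("SELECT COUNT(*) FROM sales").fetchone()[0])  # → 25000
```

Committing once per load instead of once per row avoids per-transaction overhead, and the rollback on error leaves the target table unchanged if a batch fails.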

Companies that optimize the load phase benefit from faster refresh times and reduced strain on storage systems. Strategic load design plays a significant role in end-to-end ETL process optimization.

Leveraging Automation to Reduce Manual Overhead

Manual intervention increases the risk of errors and slows down operations. Introducing automation frameworks ensures consistency and reliability.

Automation Opportunities Include:

- Error detection and retry mechanisms

- Automated job scheduling

- Pipeline health monitoring

- Auto-scaling compute resources
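Error detection and retry logic is often the first automation worth adding. The sketch below assumes transient failures surface as `ConnectionError` or `TimeoutError`; `run_with_retries` and `flaky_extract` are hypothetical, and exponential backoff with jitter is one common retry policy, not the only one. Orchestration tools generally offer built-in retry settings that can replace a hand-rolled helper like this.

```python
import random
import time


def run_with_retries(task, max_attempts=4, base_delay=0.5,
                     retry_on=(ConnectionError, TimeoutError)):
    """Run task(); on a transient error, wait with exponential backoff and try again."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except retry_on as exc:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to scheduling/alerting
            delay = base_delay * 2 ** (attempt - 1)
            delay += random.uniform(0, delay / 2)  # jitter spreads out simultaneous retries
            print(f"attempt {attempt} failed ({exc!r}); retrying in {delay:.2f}s")
            time.sleep(delay)


calls = {"n": 0}


def flaky_extract():
    """Hypothetical task that fails twice before succeeding."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("source unavailable")
    return ["row-1", "row-2"]


print(run_with_retries(flaky_extract, base_delay=0.01))  # → ['row-1', 'row-2']
```

Errors outside `retry_on`, such as a `ValueError` from bad input, propagate immediately, since retrying a deterministic failure only delays the alert.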

Well-designed automation allows teams to focus on high-value analytical tasks while maintaining dependable data flow.

Choosing the Right Tools and Infrastructure

Not all ETL tools perform equally. Choosing the right combination of technologies is crucial for long-term success.

Factors to Consider:

- Ability to scale with data growth

- Support for real-time ingestion

- Integration with cloud services

- Built-in performance tuning features

Many modern platforms offer features that support ETL process optimization, such as distributed processing, built-in caching, monitoring dashboards, and workflow orchestration.

Building a Culture of Continuous Optimization

Optimizing ETL is not a one-time effort. Data grows, business needs evolve, and workflows must adjust accordingly. Teams should implement a continuous improvement mindset, regularly reviewing performance and updating processes as needed.

Long-Term Success Strategies:

- Schedule quarterly pipeline audits

- Maintain detailed documentation

- Train team members on new tools and techniques

- Monitor cost-to-performance ratios

With ongoing evaluation and adaptation, companies ensure their pipelines remain efficient and future-ready.


FAQs

1. What is the main goal of ETL process optimization?

The primary goal is to improve the speed, accuracy, and reliability of data pipelines while reducing resource consumption.

2. How often should ETL pipelines be optimized?

Pipelines should be reviewed every few months or whenever data volume or system architecture changes significantly.

3. Can automation tools help improve ETL workflows?

Yes, automation minimizes manual errors, speeds up processes, and enhances scalability.

4. What causes slow ETL performance?

Common causes include inefficient queries, heavy transformation logic, poor infrastructure allocation, and unoptimized loading processes.

5. Does ETL process optimization reduce costs?

Absolutely. Efficient pipelines use fewer compute resources, complete faster, and require less manual effort, all of which reduce operational expenses.